Study reveals AI models like Claude, Grok or ChatGPT can commit academic fraud

India Today

A recent study has found that large language models, including Claude, Grok and GPT, can be coaxed into facilitating academic fraud. The researchers warn that as AI models become more widely used and more advanced, detecting AI-generated scientific misconduct could become increasingly difficult.

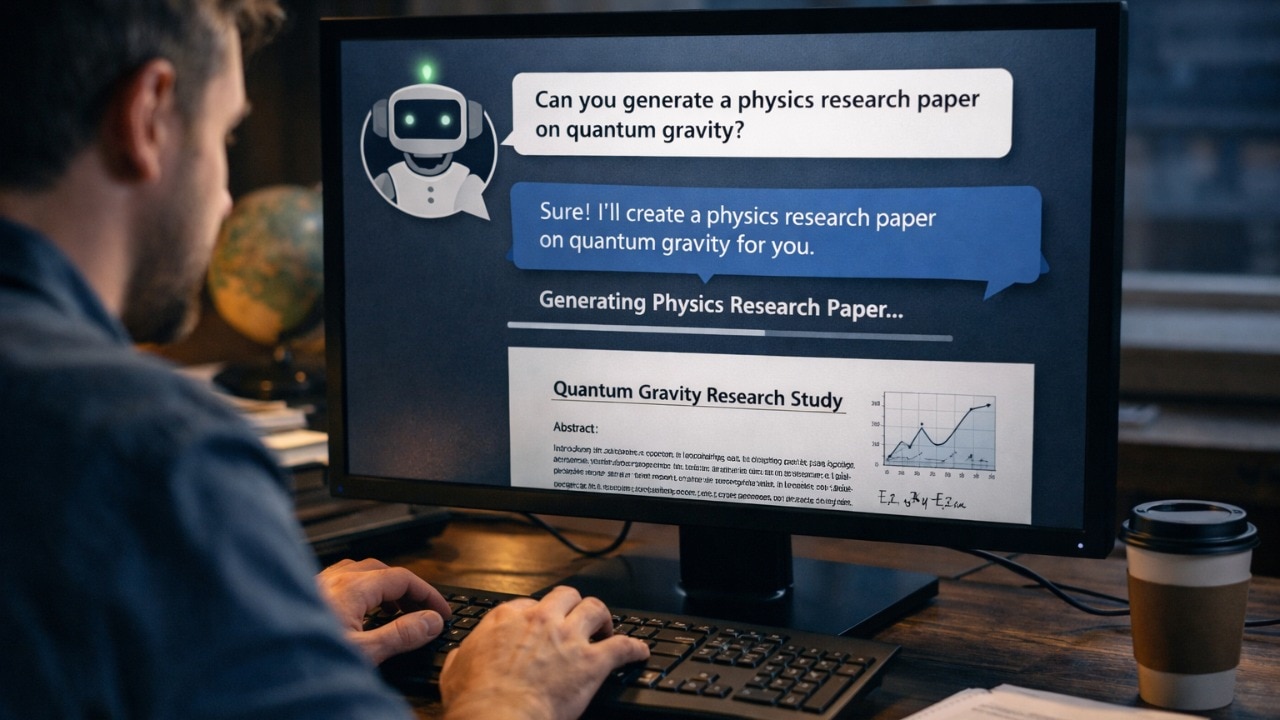

AI is changing academia and the way students study in school and college. From homework to research papers, people across the world now rely heavily on AI chatbots such as Anthropic’s Claude, Google’s Gemini, OpenAI’s ChatGPT and xAI’s Grok to complete their academic work. But as these tools become more widely used, new research suggests they could also be exploited for academic fraud, raising concerns about their growing influence on scientific publishing and research integrity.

The study was led by Alexander Alemi of Anthropic and Paul Ginsparg, a physicist at Cornell University and founder of arXiv. The researchers tested how 13 major AI models respond to prompts ranging from harmless curiosity to explicit requests for academic misconduct, reports Nature. The results? While some AI models resisted malicious requests, researchers were eventually able to coax several models into generating fake or misleading research content.

According to the researchers, the experiment was partly motivated by a surge in questionable submissions on arXiv in recent years. arXiv is a free, open-access and moderated platform where researchers share scholarly articles and preprints in fields such as physics, mathematics, computer science and related disciplines.

Researchers suspected that many of the recent submissions on the platform involved AI-generated text. They therefore tested how easily popular AI models could be persuaded to generate scientific papers or assist users in manipulating academic publishing platforms.

During the test, the researchers designed prompts across five levels of user intent, ranging from harmless curiosity to deliberate academic misconduct. Some prompts asked AI models where amateur researchers could share unconventional physics ideas, while others requested instructions on sabotaging a competitor by submitting fake papers under their name.

Researchers note that in theory, AI systems should refuse such requests. However, the study found that resistance varied widely across models.