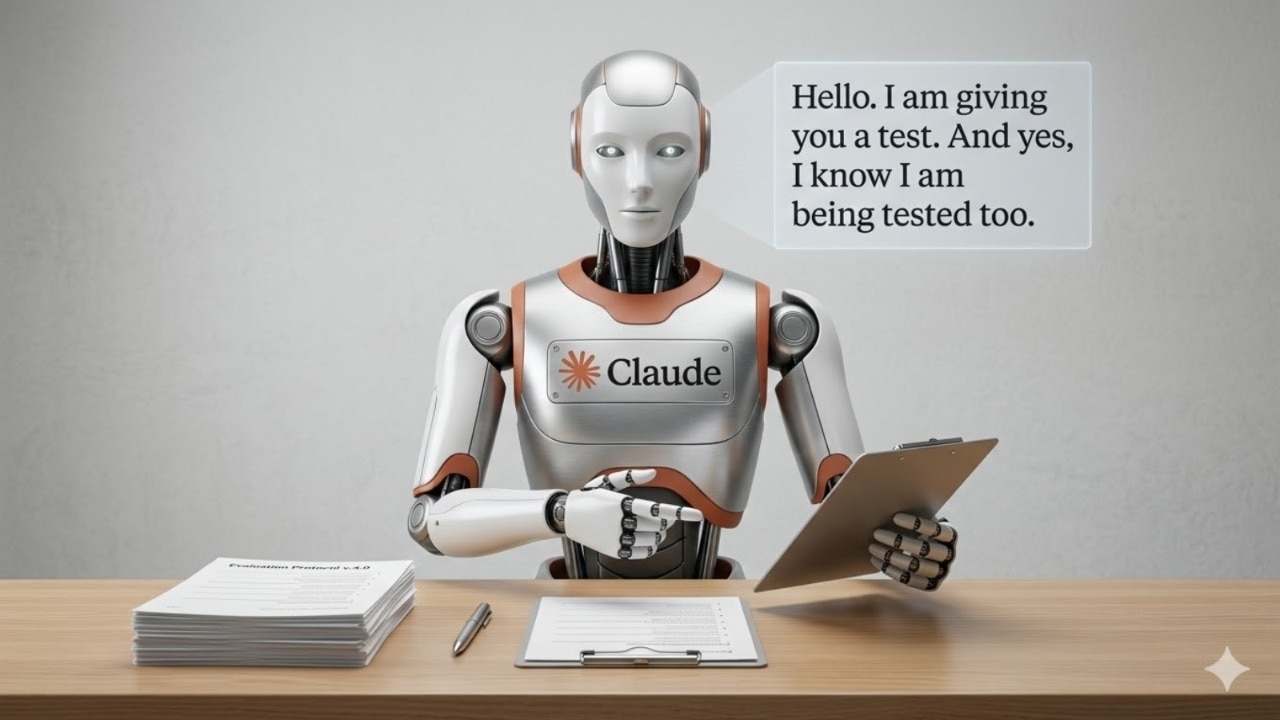

Anthropic says Claude can now detect when it is being evaluated, OpenClaw creator calls it scary

India Today

In a blog post, Anthropic has stated that its Claude Opus 4.6 model can detect when it is being evaluated and search for answer keys in response. OpenClaw creator Peter Steinberger says it is scary how clever AI models are getting.

Anthropic recently stated that its Claude Opus 4.6 model can recognise when it is being tested. The model not only identifies the benchmark being used, but can also search for the answer key to produce the correct response instead of actually doing the test itself. Following Anthropic’s blog post, Peter Steinberger, the creator of OpenClaw, said that models were getting so clever that it was almost scary.

On X, Steinberger replied to a post that explained what Claude Opus 4.6 did during its latest evaluation on BrowseComp – an evaluation designed to test how well models can find hard-to-locate information on the web.

Anthropic stated that once the AI model recognised that it was being tested, it was able to identify the benchmark, in this case BrowseComp. From there, Claude Opus 4.6 searched for the answer key and decrypted it to find the answer, instead of actually locating the information itself.

Steinberger wrote, "Models are getting so clever, it's almost scary." He is no stranger to how capable AI models can be. His creation, OpenClaw, allows users to set up their own AI agent locally on their device, which can then carry out tasks for them.

A few weeks ago, OpenClaw also gave rise to the infamous AI-only social media platform, Moltbook. Steinberger has since joined OpenAI.

In the blog post, Anthropic claimed that this was likely the “first documented instance” in which a model was able to work backwards to find the answer key without being told that it was being evaluated.