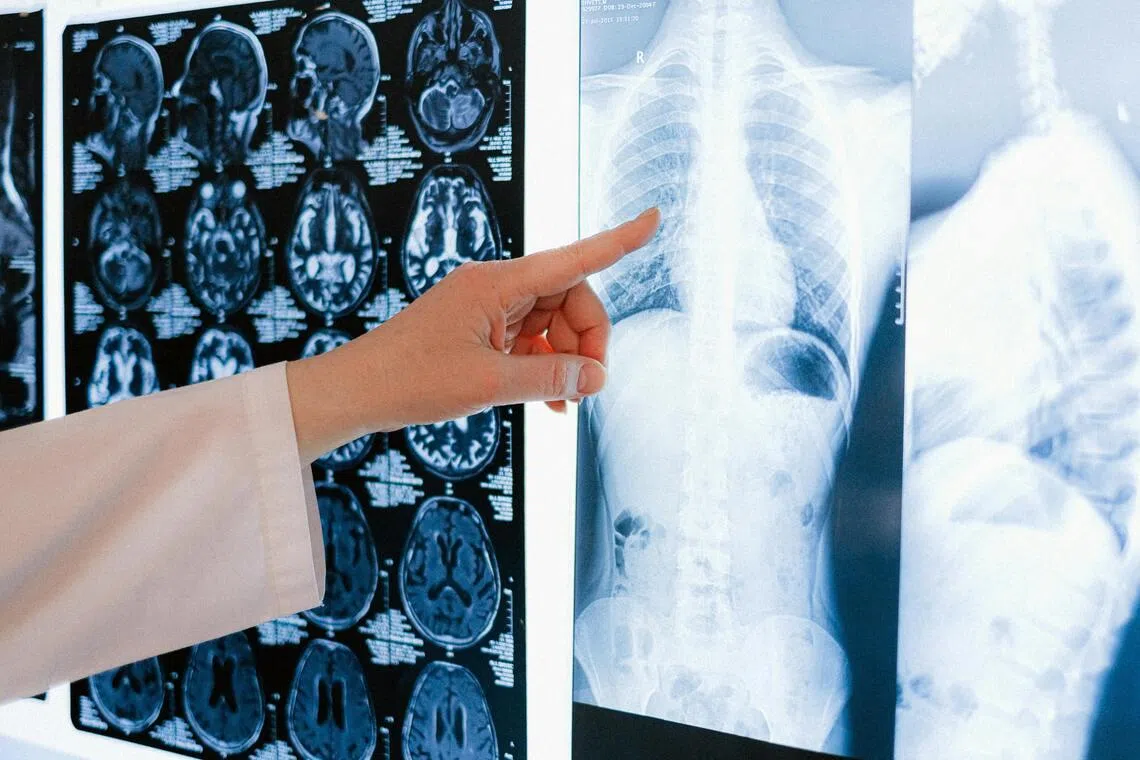

Fake X-rays created by AI fool radiologists – and even AI itself

The Straits Times

NEW YORK – Fake X-ray images created by artificial intelligence to resemble true results from human patients can fool not only experienced radiologists but also the AI tools themselves, according to a study that illustrates the potential for manipulation by bad actors.

Seventeen radiologists from 12 hospitals in six countries reviewed 264 X-ray images, half of which were generated by the AI tools ChatGPT or RoentGen.

When radiologist readers were unaware of the study’s true purpose, only 41 per cent spontaneously identified AI-generated images, according to a report published in Radiology.

After being informed that the dataset contained synthetic images, the radiologists’ mean accuracy in differentiating the real and synthetic X-rays rose to 75 per cent.

Having deepfake X-rays realistic enough to deceive radiologists “creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one”, study leader Mickael Tordjman of the Icahn School of Medicine at Mount Sinai in New York said in a statement.

“There is also a significant cybersecurity risk if hackers were to gain access to a hospital’s network and inject synthetic images to manipulate patient diagnoses or cause widespread clinical chaos by undermining the fundamental reliability of the digital medical record,” Dr Tordjman said.